torchbox.ml package

Submodules

torchbox.ml.evolutionary module

- class torchbox.ml.evolutionary.GeneticAlgorithm(pop_size: int = 50, n_gen: int = 100, crossover_prob: float = 0.8, mutate_prob: float = 0.1, elitism: bool = True, elitism_num: int = 2, device: str = 'cpu')

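Bases: ABC

A general-purpose, extensible genetic algorithm base class implemented in PyTorch. Subclasses only need to override four methods: initialize_population, crossover, mutate, and selection. The main evolution loop is generic and supports GPU acceleration.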

- abstractmethod crossover(parent1: Tensor, parent2: Tensor) → Tensor

[Must override] Crossover operation. Input: two parent individuals, each of shape (chromosome_length,). Returns: one child individual of shape (chromosome_length,).

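- evaluate_fitness(population: Tensor) → Tensor

[Optional override] Fitness function (by default the user is expected to override it, although a built-in one may also be used). Input: population tensor of shape [pop_size, chrom_len]. Returns: fitness tensor of shape [pop_size] (a larger value means a better individual).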

- abstractmethod initialize_population() → Tensor

[Must override] Population initialization. Returns: population tensor of shape [pop_size, chromosome_length].
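To illustrate the tensor shapes these hooks are documented to use, here is a minimal standalone sketch in plain PyTorch. It does not import torchbox: the class name `OneMaxSketch`, the `chrom_len` argument, and the single-point crossover rule are illustrative assumptions, not torchbox's API.

```python
import torch


class OneMaxSketch:
    """Hypothetical stand-in that mirrors the documented hook shapes (not torchbox's class)."""

    def __init__(self, pop_size: int = 50, chrom_len: int = 16, device: str = 'cpu'):
        self.pop_size = pop_size
        self.chrom_len = chrom_len  # assumed name; the source does not show how the length is set
        self.device = device

    def initialize_population(self) -> torch.Tensor:
        # Random binary chromosomes, shape [pop_size, chrom_len].
        return torch.randint(0, 2, (self.pop_size, self.chrom_len), device=self.device).float()

    def crossover(self, parent1: torch.Tensor, parent2: torch.Tensor) -> torch.Tensor:
        # Single-point crossover: head from parent1, tail from parent2.
        point = int(torch.randint(1, self.chrom_len, (1,)).item())
        return torch.cat([parent1[:point], parent2[point:]])

    def evaluate_fitness(self, population: torch.Tensor) -> torch.Tensor:
        # OneMax fitness: number of ones per individual, larger is better. Shape [pop_size].
        return population.sum(dim=1)


torch.manual_seed(0)
ga = OneMaxSketch(pop_size=8, chrom_len=16)
pop = ga.initialize_population()
fit = ga.evaluate_fitness(pop)
child = ga.crossover(pop[0], pop[1])
print(pop.shape, fit.shape, child.shape)
```

Every gene of `child` comes from one of the two parents at the same position, which is the property a crossover override would normally be expected to preserve.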

torchbox.ml.reduction_pca module

- torchbox.ml.reduction_pca.pca(x, sdim=-2, fdim=-1, npcs='all', eigbkd='svd')

Principal Component Analysis (PCA) on raw data.

- Parameters:

x (Tensor) – the input data

sdim (int, optional) – the dimension index of sample, by default -2

fdim (int, optional) – the dimension index of feature, by default -1

npcs (int or str, optional) – the number of components, by default 'all'

eigbkd (str, optional) – the backend of eigen decomposition, 'svd' (default) or 'eig'

- Returns:

U, S, K (if npcs is integer)

- Return type:

tensor

Examples

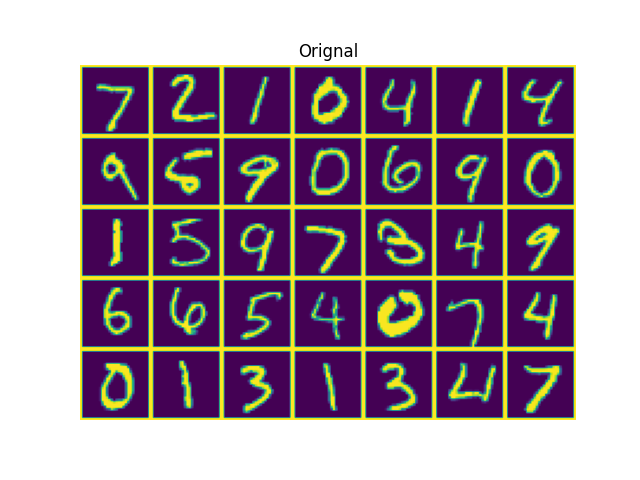

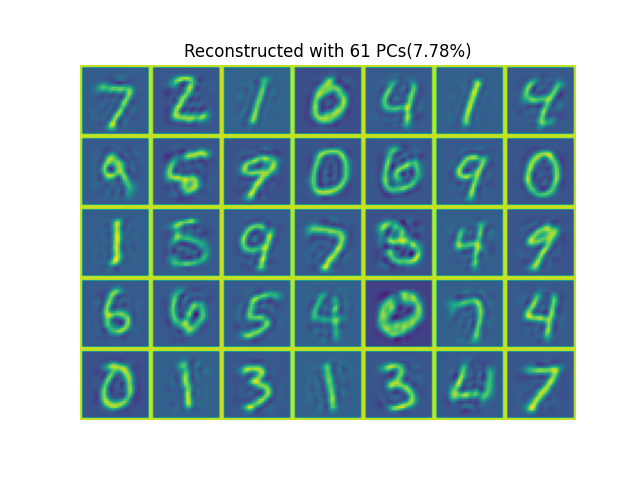

The results shown in the figures above can be obtained with the following code.

```python
import torch as th
import torchbox as tb

# Load the MNIST test set (the path is specific to the author's machine).
rootdir, dataset = '/mnt/d/DataSets/oi/dgi/mnist/official/', 'test'
x, _ = tb.read_mnist(rootdir=rootdir, dataset=dataset, fmt='ubyte')
print(x.shape)
N, M2, _ = x.shape
x = x.to(th.float32)

# PCA over the two image dimensions, keeping enough components for 90% of the variance.
pcr = 0.9
u, s = tb.pca(x, sdim=0, fdim=(1, 2), eigbkd='svd')
k = tb.pcapc(s, pcr=pcr)
print(u.shape, s.shape, k)
u = u[..., :k]
y = x.reshape(N, -1) @ u  # projection, N x k
z = y @ u.T.conj()        # reconstruction
# z[z<0] = 0
z = z.reshape(N, M2, M2)
print(tb.nmse(x, z, dim=(1, 2)))

# Show the first 35 original and reconstructed digits as 5 x 7 grids.
xp = th.nn.functional.pad(x[:35], (1, 1, 1, 1, 0, 0), 'constant', 255)
zp = th.nn.functional.pad(z[:35], (1, 1, 1, 1, 0, 0), 'constant', 255)
plt = tb.imshow(tb.patch2tensor(xp, (5*(M2+2), 7*(M2+2)), dim=(1, 2)), titles=['Original'])
plt = tb.imshow(tb.patch2tensor(zp, (5*(M2+2), 7*(M2+2)), dim=(1, 2)), titles=['Reconstructed with %d PCs(%.2f%%)' % (k, 100*k/u.shape[0])])
plt.show()

# Same reconstruction with a fixed number of components (npcs=2) on flattened images.
u, s = tb.pca(x.reshape(N, -1), sdim=0, fdim=1, npcs=2, eigbkd='svd')
print(u.shape, s.shape)
y = x.reshape(N, -1) @ u  # projection, N x k
z = y @ u.T.conj()
z = z.reshape(N, M2, M2)
print(tb.nmse(x, z, dim=(1, 2)))
```
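For readers without the MNIST files, the projection and reconstruction steps used above (`y = x @ u`, then `z = y @ u.T.conj()`) can be tried on synthetic data. This sketch uses only `torch.linalg.svd`, not torchbox. Centering the data first and taking the principal directions from the right singular vectors are assumptions about a typical PCA, not a statement of how `tb.pca` is implemented.

```python
import torch

torch.manual_seed(0)
N, D, k = 200, 10, 3

# Synthetic data that lies (almost) in a k-dimensional subspace of R^D.
latent = torch.randn(N, k)
mixing = torch.randn(k, D)
x = latent @ mixing + 0.01 * torch.randn(N, D)

# Center the samples (assumed preprocessing), then decompose.
xc = x - x.mean(dim=0, keepdim=True)
_, sv, vh = torch.linalg.svd(xc, full_matrices=False)
u = vh.T.conj()[:, :k]   # D x k principal directions

y = xc @ u               # N x k scores
z = y @ u.T.conj()       # N x D reconstruction from k components

# Relative reconstruction error over the whole data set.
rel_err = float(((xc - z) ** 2).sum() / (xc ** 2).sum())
print(u.shape, y.shape, rel_err)
```

Because the data is nearly rank k, the relative error should be close to zero; dropping `k` below the true latent dimension makes it grow.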

- torchbox.ml.reduction_pca.pcapc(s, pcr=0.9)

Gets the number of principal components according to the ratio of variance.

- Parameters:

s (Tensor) – eigenvalues

pcr (float, optional) – the ratio of variance, by default 0.9
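One common way to pick the number of components from a variance ratio is to take the smallest k whose leading eigenvalues account for at least `pcr` of the total. The plain-Python sketch below shows that rule. The function name `num_components` is hypothetical, and whether `pcapc` uses exactly this threshold convention (for example `>=` rather than `>`) is an assumption.

```python
from itertools import accumulate


def num_components(eigvals, pcr=0.9):
    """Smallest k such that the top-k eigenvalues reach a fraction `pcr` of the total."""
    vals = sorted((float(v) for v in eigvals), reverse=True)  # largest first
    total = sum(vals)
    for k, running in enumerate(accumulate(vals), start=1):
        if running >= pcr * total:
            return k
    return len(vals)


# Hypothetical eigenvalues, chosen only for illustration.
k = num_components([5.0, 3.0, 1.5, 0.3, 0.2], pcr=0.9)
print(k)
```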

- torchbox.ml.reduction_pca.pcat(x, sdim=-2, fdim=-1, isnorm=True, eigbkd='svd')

Gets the Principal Component Analysis transformation.

- Parameters:

x (Tensor) – the input data

sdim (int, optional) – the dimension index of sample, by default -2

fdim (int or tuple, optional) – the dimension index of feature, by default -1

isnorm (bool, optional) – whether to normalize the covariance matrix by the number of samples, by default True

eigbkd (str, optional) – the backend of eigen decomposition, 'svd' (default) or 'eig'

- Returns:

the PCA transformation matrix, the eigenvalues

- Return type:

tensor
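The two `eigbkd` choices correspond to two standard routes to the same quantities: eigendecomposition of the covariance matrix, or SVD of the data. The sketch below, in plain PyTorch without torchbox, checks that the two routes agree on synthetic data. Centering the data and dividing by the number of samples N (mirroring `isnorm=True`) are assumptions; the source does not state whether torchbox centers the input.

```python
import torch

torch.manual_seed(0)
N, D = 500, 6
x = torch.randn(N, D, dtype=torch.float64) @ torch.randn(D, D, dtype=torch.float64)
xc = x - x.mean(dim=0, keepdim=True)   # centering is an assumed preprocessing step

# Route 1 ('eig'-style): eigendecomposition of the covariance matrix.
cov = xc.T @ xc / N                    # normalized by the number of samples
evals, evecs = torch.linalg.eigh(cov)  # eigh returns eigenvalues in ascending order
evals, evecs = evals.flip(0), evecs.flip(1)  # reorder to descending

# Route 2 ('svd'-style): singular values of the centered data.
_, sv, vh = torch.linalg.svd(xc, full_matrices=False)
evals_svd = sv ** 2 / N
evecs_svd = vh.T

# Eigenvalues should match; eigenvectors should match up to sign.
same_vals = torch.allclose(evals, evals_svd)
same_vecs = torch.allclose(evecs.abs(), evecs_svd.abs(), atol=1e-6)
print(same_vals, same_vecs)
```

The SVD route avoids forming the covariance matrix explicitly, which is one common reason to prefer it as a default.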